Why Game Mechanics Work for Security Training (and Why Most Vendors Get It Wrong)

Most gamified security training is a quiz with a badge. Real game design looks fundamentally different, and it matters for engagement and measurement.

Every security awareness vendor claims their product is gamified. Almost none of them mean what game designers mean by that word.

Gamification in the awareness training industry has become a checkbox. It means you added a quiz at the end of a module. Maybe a completion badge. The word has been so thoroughly hollowed out that it now refers to surface decoration on top of the same content delivery model that has been failing for a decade: a slideshow that employees click through once a year because compliance requires it.

That is not gamification. That is a quiz with a certificate.

I have some context on this that most security engineers do not. My undergraduate degree at Macquarie University was in IT with a game design and development specialization, so I came into security with a background in what makes interactive systems engaging and what makes them forgettable. When I look at the security awareness market, I see the same design failures that kill games before they launch.

What vendors actually ship

The typical "gamified" awareness product looks like this: a set of training modules, each followed by a multiple-choice quiz. You get a score. Maybe a completion badge. The module is marked done in your compliance dashboard. You do this once a year, or once a quarter if your organization is ambitious. There is no progression, no meaningful decision, no feedback loop, and no reason to come back.

That is the state of the art. Most products do not even have a leaderboard, let alone any of the mechanics that game designers would consider baseline. Here is what the typical vendor product looks like compared to what game designers actually build:

| | Vendor "gamification" | Actual game design |
|---|---|---|
| Points | Completion score on a quiz | Scaled by risk (confidence betting) |
| Progression | Linear (module 1, 2, 3, done) | Non-linear unlock gates tied to contribution |
| Feedback | Correct or incorrect | Forensic breakdown of why, with signal analysis |
| Competition | None, or an annual report nobody reads | Real-time 1v1 ranked matches with skill-based tiers |
| Retention | Mandatory annual retraining | Daily challenges, seasonal content, cosmetic rewards |
| Player agency | None. Click through slides. | Choose mode, bet confidence, inspect signals, compete |

The vendor column describes a compliance exercise with a score attached. The game design column describes a game. The difference is not cosmetic. It is structural.

What game designers actually mean

Game design is a discipline with specific vocabulary and decades of research behind it. When a game designer talks about engagement, they are talking about interlocking systems that give players reasons to come back, reasons to care about their performance, and reasons to invest in the outcome. The core mechanics that real games use to sustain engagement are:

Progression loops. XP, levels, and unlocks that create a visible sense of forward movement. Not a linear track that ends, but a system where every session moves the player toward something. The key word is "visible." If the player cannot see where they are going, the progression does not work.

Risk/reward decisions. Choices where the player stakes something. A bet on their own confidence. A decision to play aggressively for higher points or conservatively for safety. The moment a player has something to lose, they pay attention differently. A quiz with no stakes produces no engagement.

Meaningful feedback. Not "correct" or "incorrect" but a breakdown of why. What signals were present? What did you miss? What would you look for next time? Feedback that teaches is feedback that creates a reason to play again, because the player knows they can do better.

Social competition. Ranked systems that update in real time. Head-to-head matches where you can see your opponent. Leaderboards that reflect skill, not just completion. Competition is the engagement mechanic that scales best because it is self-renewing: as long as someone is ahead of you, there is a reason to play.

Content gating. Unlocking new modes as the player demonstrates competence, not just time spent. Gating creates pacing. It gives new players a clear path forward without overwhelming them, and it gives experienced players something to work toward. It also serves a structural purpose: it ensures players earn access to harder content rather than skipping to it.

These are not nice-to-have features. They are the engineering behind why people play games for hundreds of hours. When a vendor adds points to a quiz and calls it gamified, they are skipping all of this. They are decorating the surface and ignoring the structure.

Threat Terminal, mechanic by mechanic

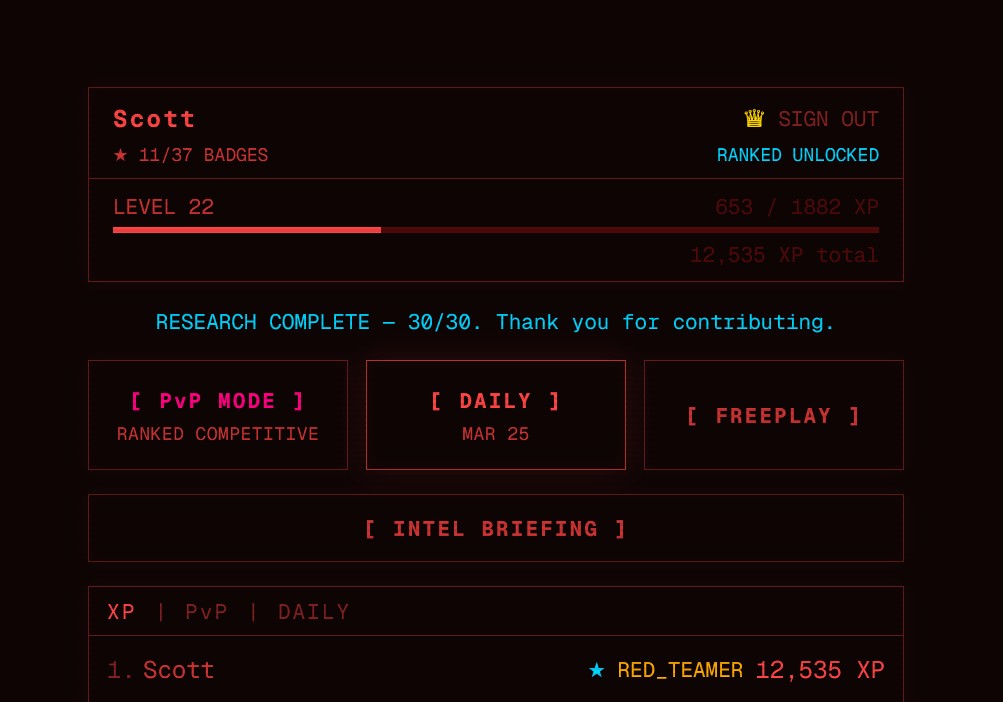

Threat Terminal is a cybersecurity research game I built where players classify AI-generated emails as phishing or legitimate. Every answer feeds a live study measuring which phishing techniques humans actually miss when writing quality is no longer a giveaway. The retro terminal aesthetic, the XP, the ranked PvP, all of it exists to get people to classify emails without it feeling like a compliance exercise.

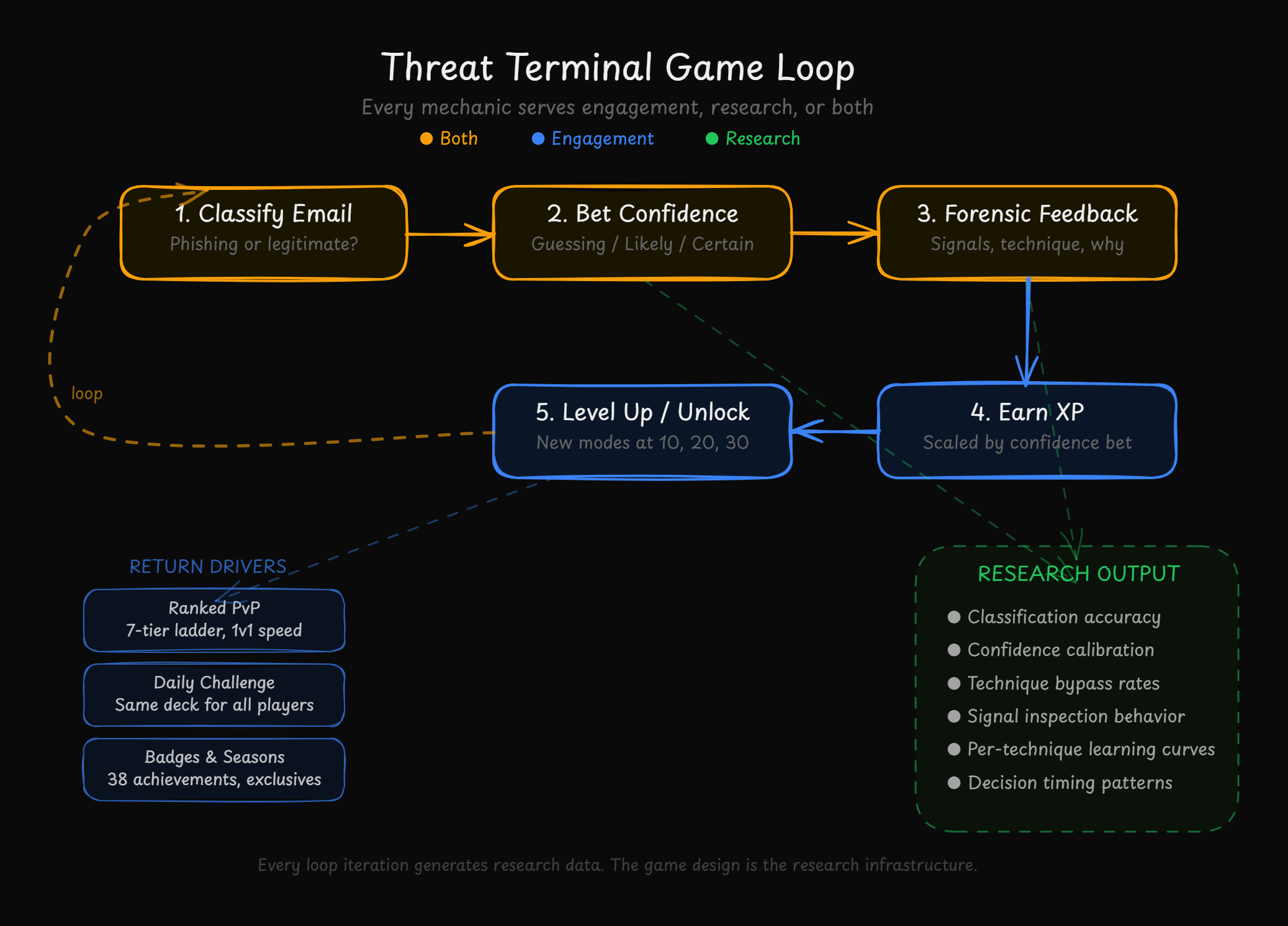

What makes it a game and not a quiz is the mechanics underneath. Every system serves two purposes: engagement (keeping players coming back) and research (producing usable data). The full loop looks like this:

Here is how each mechanic works:

| Mechanic | Engagement purpose | Research purpose |
|---|---|---|
| XP scaling by confidence | Risk/reward decision on every card | Measures calibration, not just accuracy |
| Unlock gates (10/20/30 answers) | Clear progression path for new players | Guarantees baseline research data before competition |
| Ranked PvP (7-tier ladder) | Social competition, daily return incentive | Separate competitive layer, does not contaminate study |
| Daily challenges (identical deck) | Shared experience, daily return reason | Controls for card variance across players |
| SIGINT terminal personality | Emotional investment, humor, surprise | Engagement layer only |
| Forensic signal breakdown | Teaching moment after every answer | Measures which signals players actually inspect |
| Badge system (38 badges, 5 rarities) | Collection drive, visible achievement | Tracks milestone completion rates |
| Seasonal exclusives (Season 0) | Urgency, scarcity, bragging rights | Timestamps engagement waves for cohort analysis |

The dual-purpose design is the point. Every mechanic that makes the game more engaging also makes the research better, with a few exceptions where the mechanic is purely for engagement (SIGINT, PvP) and exists because players who are having fun produce more data than players who are not.

Confidence betting as a risk/reward mechanic

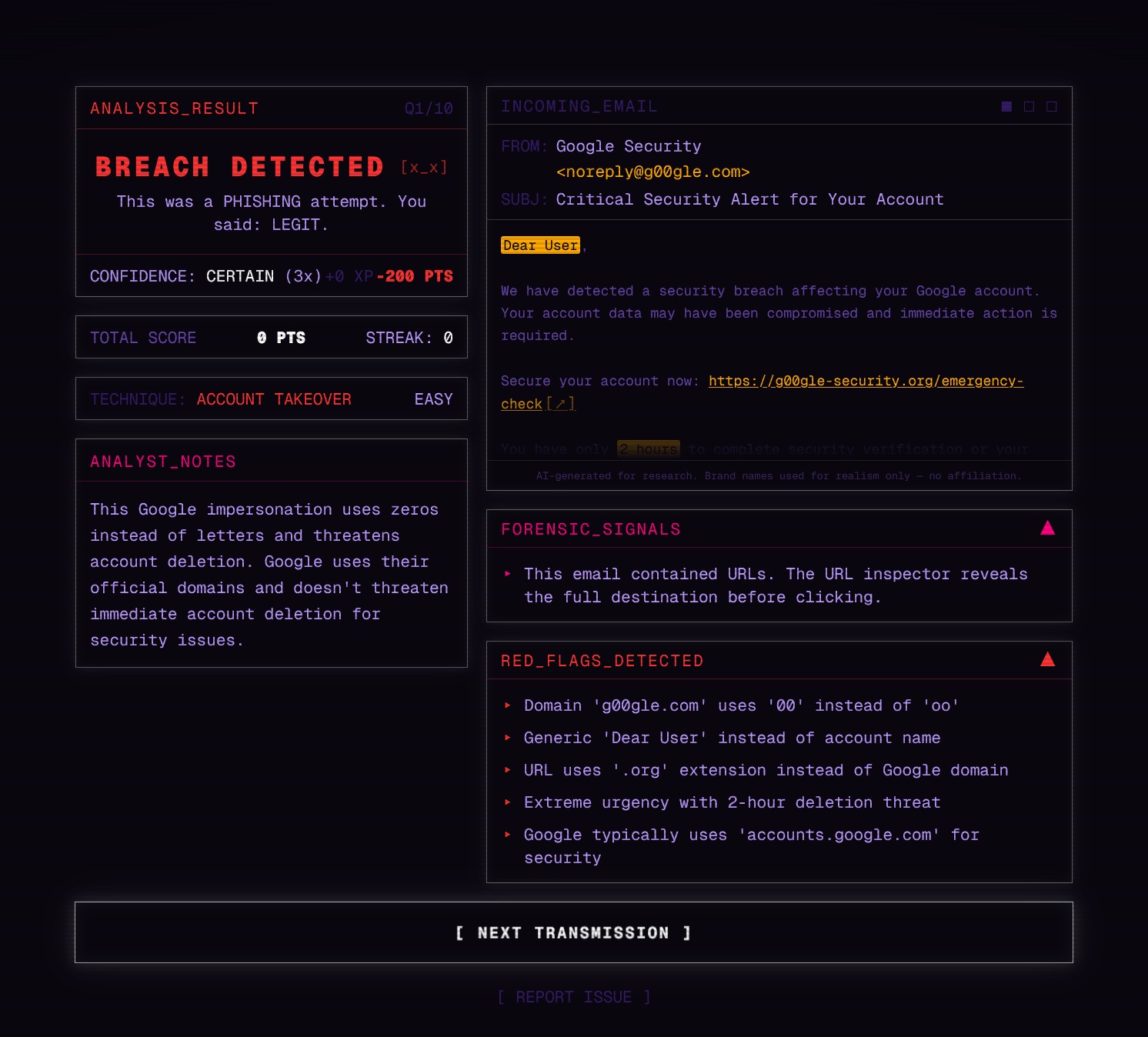

The most important mechanic from both a game design and research perspective is confidence betting. After classifying each email, players bet on how confident they are: GUESSING, LIKELY, or CERTAIN. XP scales with the bet. GUESSING pays 1x, LIKELY pays 2x, CERTAIN pays 3x. Get it wrong at CERTAIN and you lose more than you would have at GUESSING.

This is a genuine risk/reward decision. A quiz asks "is this phishing?" and awards the same points regardless of how the player felt about their answer. Confidence betting asks "how sure are you?" and puts XP on the line. That changes how players engage with the content. They read more carefully. They inspect more signals. They hesitate when they should hesitate.
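The scoring rule is simple enough to sketch. This is a minimal illustration, not Threat Terminal's actual code: the 1x/2x/3x multipliers come from the description above, while `BASE_XP`, the function name, and the symmetric penalty (a wrong answer loses exactly what a right one would have earned) are assumptions made for the example. The only stated constraint is that a wrong CERTAIN costs more than a wrong GUESSING.

```python
from enum import Enum


class Confidence(Enum):
    # Multipliers as described in the post: 1x, 2x, 3x.
    GUESSING = 1
    LIKELY = 2
    CERTAIN = 3


BASE_XP = 10  # hypothetical base reward per card, chosen for illustration


def xp_delta(correct: bool, confidence: Confidence, base_xp: int = BASE_XP) -> int:
    """Return the XP change for a single answer.

    A correct answer pays base_xp scaled by the confidence multiplier.
    A wrong answer loses the same scaled amount (an assumed penalty rule),
    so a wrong CERTAIN always costs more than a wrong GUESSING.
    """
    stake = base_xp * confidence.value
    return stake if correct else -stake
```

Under these assumptions, betting CERTAIN on a card you are unsure about has negative expected value as soon as your real hit rate drops below 50%, which is exactly the pressure that makes players slow down and inspect signals.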

From a research perspective, this mechanic produces calibration data. The early findings showed that 57% of incorrect answers were made at CERTAIN confidence. Players are not failing because they are guessing badly. They are failing while believing they are right. That finding would be invisible without the confidence mechanic.
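A rough sketch of how a figure like that can be computed from answer logs. The record shape (a correctness flag plus a confidence label) and the function name are assumptions, and the sample rows are made up for illustration; they are not study data.

```python
from collections import Counter


def certain_share_of_errors(answers):
    """Fraction of incorrect answers that were bet at CERTAIN.

    `answers` is an iterable of (correct, confidence) pairs, where
    confidence is one of "GUESSING", "LIKELY", "CERTAIN".
    Returns None when there are no incorrect answers to divide by.
    """
    wrong = [confidence for correct, confidence in answers if not correct]
    if not wrong:
        return None
    return Counter(wrong)["CERTAIN"] / len(wrong)


# Made-up sample log: four mistakes, two of them at CERTAIN.
sample = [
    (True, "CERTAIN"),
    (False, "CERTAIN"),
    (False, "GUESSING"),
    (True, "LIKELY"),
    (False, "CERTAIN"),
    (False, "LIKELY"),
]
share = certain_share_of_errors(sample)
```

A plain quiz log only has the first element of each pair, which is why this metric cannot be recovered from accuracy data alone.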

Unlock gates as content gating

New players in Threat Terminal start with Research Mode only. After 10 research answers, PvP unlocks. After 20, Daily Challenge unlocks. After 30, Freeplay unlocks. This is content gating tied to contribution.

From a game design perspective, this gives new players a clear path forward. The home screen starts focused and expands as they play. From a research perspective, it guarantees that every player contributes a baseline of study data before accessing competitive and unranked modes. The unlock gates are why the research pipeline stays healthy even as the competitive community grows.
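The gate logic itself is small. A minimal sketch, using the 10/20/30 thresholds described above; the dictionary and function names are hypothetical, and the real game may track this differently.

```python
# Research answers required before each mode becomes available.
# Thresholds are from the post; the structure is an illustrative assumption.
UNLOCK_THRESHOLDS = {
    "Research Mode": 0,
    "PvP": 10,
    "Daily Challenge": 20,
    "Freeplay": 30,
}


def unlocked_modes(research_answers: int) -> list[str]:
    """Return the modes a player can access, in unlock order."""
    return [
        mode
        for mode, needed in UNLOCK_THRESHOLDS.items()
        if research_answers >= needed
    ]
```

The important design choice is the input: the gate counts research answers, not sessions or minutes played, so progress in the game and contribution to the study are the same number.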

A vendor training product that drops all content on the user at once and hopes they complete it is ignoring this principle entirely.

Forensic feedback as a teaching loop

After every answer in Threat Terminal, players see a forensic breakdown: the technique used, the signals present, what they could have inspected to reach the right answer. URL structures, sender domain analysis, and contextual clues are surfaced explicitly.

Compare this to "Correct! The answer was phishing." One produces learning. The other produces nothing. Meaningful feedback is what creates the loop: the player sees what they missed, understands why, and applies that knowledge on the next card. That loop is the engine of both engagement and skill development.

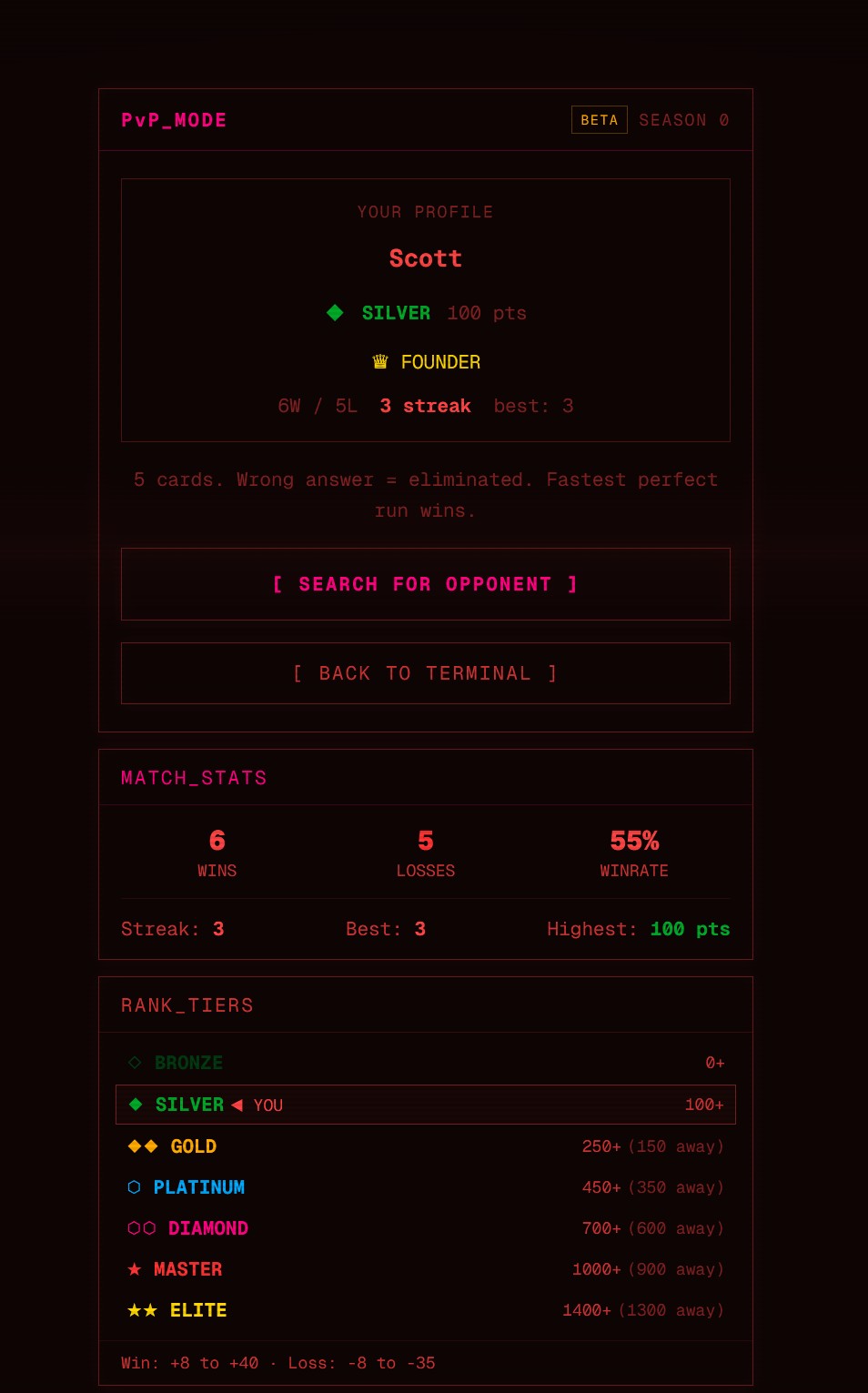

Ranked PvP as social competition

Threat Terminal v2 added real-time 1v1 ranked matches. Both players get the same five cards. Get one wrong and you are eliminated. First to finish wins. The ladder has seven ranked tiers, from Bronze through Elite, with skill-based point scaling.

This mechanic serves engagement only. PvP data is not included in the research dataset because the competitive context changes player behavior. But it is the single strongest return driver. Players who queue ranked matches come back the next day. Players who complete a quiz do not.
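The match rules can be sketched as a small resolution function. Treat this as an illustration under stated assumptions rather than the game's implementation: the `Run` record, the use of elapsed seconds to decide "first to finish", and the draw outcomes (both players eliminated, or identical finish times) are all choices made for the example. The post only specifies the shared five-card deck, elimination on a wrong answer, and that the first finisher wins.

```python
from dataclasses import dataclass
from typing import Optional

DECK_SIZE = 5  # both players get the same five cards


@dataclass
class Run:
    player: str
    results: list[bool]    # per-card correctness, in the order played
    finish_seconds: float  # elapsed time when the player stopped


def survived(run: Run) -> bool:
    """A player survives only by answering every card correctly."""
    return len(run.results) == DECK_SIZE and all(run.results)


def winner(a: Run, b: Run) -> Optional[str]:
    """Return the winning player's name, or None for a draw (assumed rule)."""
    a_ok, b_ok = survived(a), survived(b)
    if a_ok and b_ok:
        if a.finish_seconds == b.finish_seconds:
            return None
        return a.player if a.finish_seconds < b.finish_seconds else b.player
    if a_ok:
        return a.player
    if b_ok:
        return b.player
    return None  # both eliminated
```

Because speed only matters between two clean runs, the rule rewards accuracy first and pace second.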

The measurement payoff

This is the part that most discussions about gamification miss entirely. Game mechanics do not just keep people playing. They create the conditions for measurement.

Traditional security awareness training produces one data point per employee per year: completion. Maybe a score. That is not data. That is a compliance checkbox.

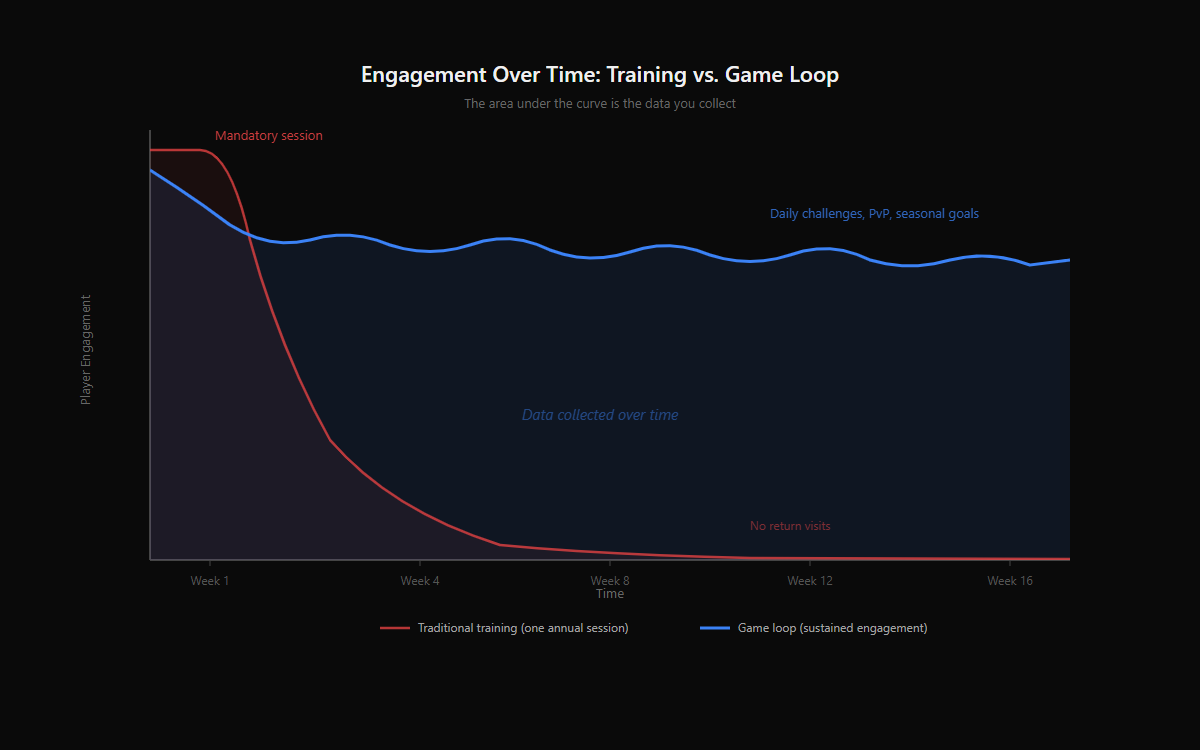

A game loop that produces return visits produces something fundamentally different:

- Without repeat engagement, you cannot measure learning effects over time. One session gives you a snapshot. Ten sessions give you a trajectory.

- Without confidence betting, you cannot measure calibration. Knowing someone got 80% correct is less useful than knowing they were certain on 60% of their mistakes.

- Without daily challenges using identical decks, you cannot control for content variance. If two players saw different questions, you cannot compare their performance meaningfully.

- Without unlock gates, a small number of power users dominate the dataset. Participation caps and gated access keep the sample representative.
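One common way to give every player an identical daily deck is to seed the shuffle with the calendar date. The sketch below shows that technique; it is an assumption about how such a deck could be built, not a description of Threat Terminal's code, and the function name, card IDs, and deck size of 10 are hypothetical.

```python
import random
from datetime import date


def daily_deck(card_ids, day: date, size: int = 10):
    """Pick the same `size` cards, in the same order, for everyone on `day`.

    Seeding a private Random instance with the ISO date string makes the
    draw deterministic per day. Sorting first removes any dependence on
    the order in which card IDs happen to be stored.
    """
    rng = random.Random(day.isoformat())
    return rng.sample(sorted(card_ids), size)


# Two players requesting the deck on the same day get identical cards.
pool = [f"card-{n:03d}" for n in range(50)]
deck_a = daily_deck(pool, date(2025, 1, 15))
deck_b = daily_deck(list(reversed(pool)), date(2025, 1, 15))
```

With the deck held constant, any difference in two players' results on a given day comes from the players rather than from the cards they happened to draw.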

The area under the curve is the data you collect. Traditional training produces a single spike and a long tail of nothing. A game loop produces sustained engagement, which means sustained data collection. The game design IS the research infrastructure.

In Threat Terminal, this has produced over 1,600 classified emails from 100+ participants across six phishing technique categories. The preliminary data is already surfacing patterns in technique-specific bypass rates and confidence calibration. None of that would exist without the game mechanics that keep players coming back.

What this means for security programs

If your awareness program's engagement data shows one session per employee per year, the problem is not the content. It is the design. You are measuring compliance, not capability.

Game mechanics are not a feature request for your awareness vendor. They are the difference between training that produces measurement and training that produces a checkbox. The bar for "gamified" should be higher than a quiz with points. It should mean: does this system give people a reason to come back? Does it produce data you can act on? Does it create a feedback loop that builds skill over time?

Most of what the industry calls gamified fails all three.

The techniques exist. The design principles are well understood. They have been used in games for decades to sustain engagement across millions of users. Applying them to security training is not speculative. It is just underexplored, because the people building these products come from compliance backgrounds, not design backgrounds.

That gap is where the opportunity is.

Play Threat Terminal to see these mechanics in action, or read the research findings from 100+ participants.
