How Cybersecurity Teams Actually Use AI: What I Told Students at PBSC

I spoke at Palm Beach State College's Cybersecurity Symposium on how security teams use AI in practice and what students can do right now to prepare.

Last week I spoke at Palm Beach State College's Cybersecurity Symposium. The talk was titled "Cybersecurity Teams & The Power of Using AI," but the real question driving it was simpler: what does AI actually look like in a security operations workflow, and what should students be doing about it right now?

The room was full of cybersecurity students, many of them part of the college's Ethical Hackers Club. The energy was great. These are students who are already building things and competing in CTFs, so I wanted to give them something practical, not theoretical.

The Elephant in the Room

I opened with the question everyone was thinking: "Is AI going to take my cybersecurity job?"

The short answer is no, not yet. But that framing misses the point. AI is not replacing analysts. It is replacing workflows. The people who learn to work with these tools will outperform everyone who does not. That is already happening on my team.

How My Team Actually Uses AI

I walked through six real ways my team at H.I.G. Capital uses AI every day:

- Draft detection rules. AI proposes. We validate.

- Summarize log noise. 10,000 events condensed to three sentences.

- Accelerate scripting. Faster scripts, but never blindly trusted.

- Speed up reporting. AI drafts. We fact-check.

- Research faster. Threat intel synthesis, always cross-referenced.

- Assist, not decide. AI co-pilots. Humans decide.

The thread through all of it: we interact with AI constantly, but we never trust it blindly. Every output gets validated. That distinction matters.
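To make "AI proposes, we validate" concrete, here is a minimal sketch of checking a drafted detection rule against analyst-labeled samples before accepting it. It is an illustration only, not the tooling described in the talk: the regex rule, the sample command lines, and the names `validate_rule` and `ValidationResult` are all hypothetical, and a real pipeline would test against far more data in the detection platform's own rule language.

```python
import re
from dataclasses import dataclass


@dataclass
class ValidationResult:
    missed: list[str]        # malicious samples the rule failed to flag
    false_alarms: list[str]  # benign samples the rule wrongly flagged

    @property
    def accepted(self) -> bool:
        # Accept only if the rule catches every bad sample and no good one.
        return not self.missed and not self.false_alarms


def validate_rule(pattern: str, malicious: list[str], benign: list[str]) -> ValidationResult:
    """Check a proposed regex rule against analyst-labeled samples."""
    rule = re.compile(pattern)  # raises re.error if the draft is not valid regex
    missed = [line for line in malicious if not rule.search(line)]
    false_alarms = [line for line in benign if rule.search(line)]
    return ValidationResult(missed, false_alarms)


# Hypothetical AI-drafted rule: flag PowerShell launched with an encoded command.
proposed = r"(?i)powershell(\.exe)?\s.*-enc(odedcommand)?\b"

# Made-up samples standing in for logs an analyst has already labeled.
malicious_samples = [
    "powershell.exe -NoProfile -EncodedCommand SQBFAFgA",
    "PowerShell -w hidden -enc SQBFAFgA",
]
benign_samples = [
    "powershell.exe -File C:\\scripts\\backup.ps1",
    "cmd.exe /c dir",
]

result = validate_rule(proposed, malicious_samples, benign_samples)
print("accepted" if result.accepted else "rejected", result)
```

The point of the design is that the human-labeled samples, not the model, decide whether the rule ships. A draft that misses a known-bad sample or fires on a known-good one gets rejected and sent back for another iteration.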

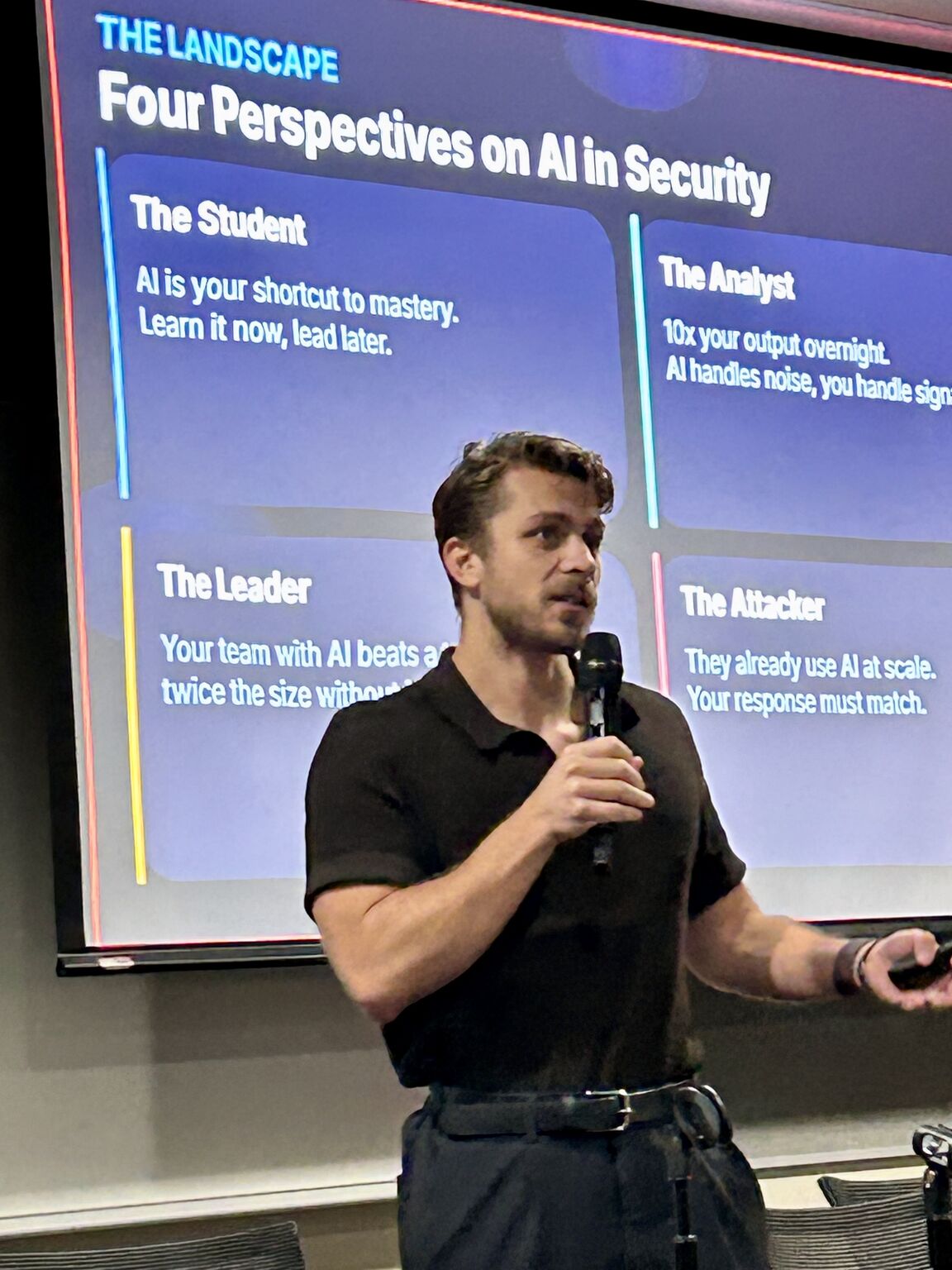

Four Perspectives on AI in Security

I framed the landscape through four lenses:

The Student: AI is your shortcut to mastery. Learn it now, lead later.

The Analyst: 10x your output overnight. AI handles noise, you handle signal.

The Leader: Your team with AI beats a team twice the size without it.

The Attacker: They already use AI at scale. Your response must match.

Each perspective reinforces the same conclusion. AI is a force multiplier, and the gap between those who use it and those who do not is widening fast.

The Gap Is Your Advantage

I was honest about where the industry is right now. AI coding tools are powerful, but one misconfiguration exposes everything. Leadership at many organizations has not caught up. A lot of engineers are coasting on traditional methods while attackers scale with AI.

That gap is exactly where students can step in. With tools like Claude Code, Cursor, and ChatGPT, you can build whatever you want. I built Enterprise-Zapp, an open-source tool that audits cloud apps and generates security health reports. That used to take months. AI made it possible in days.

Security has a massive talent gap. The people who learn AI now will fill it.

Myths I Addressed

A few things I hear constantly that needed correcting:

"Just learn prompt engineering." If you do not understand the problem, you cannot evaluate the answer. Fundamentals come first.

"AI will take my job." Every new AI capability is a new attack vector. The industry needs more defenders, not fewer.

"I don't need fundamentals." AI hallucinates confidently. I have watched leaders propose AI-generated ideas that were completely wrong. You need to know enough to catch it.

"AI is only for big teams." Small teams gain the most from AI force-multiplication.

What Students Should Do Right Now

I closed with five action items:

- Master networking, identity, and cloud fundamentals.

- Learn to read and analyze logs before automating them.

- Always validate what AI gives you. Question everything.

- Use AI to accelerate your thinking, not replace it.

- Build projects. Ship something. Show, do not just tell.

I also shared a list of project ideas: an AI phishing detector, a log analysis dashboard, a detection rule generator, an automated CTF solver, a vulnerability scanner, and an incident response bot. Pick one. Build it in a weekend. Put it on your resume.
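As a possible starting point for the log analysis idea, and for practicing reading logs by hand before automating them, here is a small sketch that counts failed SSH logins per source address. The log lines are made-up samples in the style of OpenSSH `sshd` messages, and the function name `failed_logins_by_source` is hypothetical. Treat it as a first step to build on, not a finished dashboard.

```python
import re
from collections import Counter

# Captures the username and source address from sshd "Failed password" lines.
FAILED_LOGIN = re.compile(
    r"Failed password for (?:invalid user )?(\S+) from (\S+) port \d+"
)


def failed_logins_by_source(lines):
    """Count failed password attempts per source address."""
    counts = Counter()
    for line in lines:
        match = FAILED_LOGIN.search(line)
        if match:
            counts[match.group(2)] += 1
    return counts


# Made-up sample lines using documentation-reserved IP ranges.
sample_log = [
    "Jan 10 03:12:01 web01 sshd[1042]: Failed password for invalid user admin from 203.0.113.5 port 51122 ssh2",
    "Jan 10 03:12:03 web01 sshd[1042]: Failed password for root from 203.0.113.5 port 51124 ssh2",
    "Jan 10 03:12:09 web01 sshd[1050]: Accepted publickey for deploy from 198.51.100.7 port 40022 ssh2",
    "Jan 10 03:13:15 web01 sshd[1061]: Failed password for root from 192.0.2.44 port 60210 ssh2",
]

counts = failed_logins_by_source(sample_log)
for source, attempts in counts.most_common():
    print(source, attempts)
```

Once you can explain every line the script matches and every line it skips, handing the same question to an AI tool becomes much safer, because you know what a correct answer looks like.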

Threat Terminal Demo

I wrapped up by showing Threat Terminal, the gamified phishing detection research platform I built. Students in the room played it live, and their results contributed directly to the study. Most people cannot reliably spot AI-generated phishing, and the data so far bears that out. The preliminary empirical findings from 153 participants are now published on Zenodo.

Thank You, PBSC

This was my second time speaking at Palm Beach State College. I previously presented a career roadmap at their CyberWeek Conference in October 2025. The cybersecurity program there is doing real work. The students are engaged, the faculty care, and the Ethical Hackers Club is building exactly the kind of hands-on culture that produces strong security professionals.

If you are a student reading this: the window is open right now. AI will not replace you, but someone who uses AI well will. Be curious, build things, and stay skeptical.
